The ¥89 Router: What I Found in Shenzhen

I went to Shenzhen to see how they were catching up. I found out they weren't catching up at all.

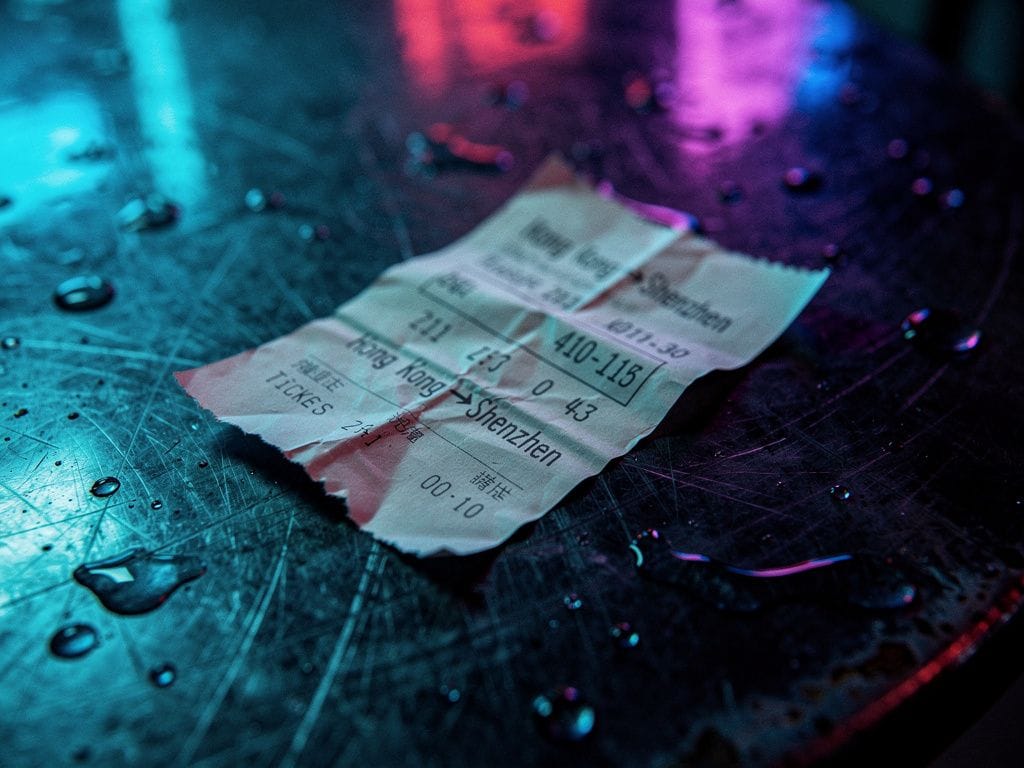

The Ferry

The hydrofoil from Hong Kong to Shenzhen runs at night like a blade through black water. Diesel and humidity. Neon from the container ports bleeding across the delta in streaks of sodium orange and LED white. I had a receipt in my pocket for the fare and a question in my head that wouldn't settle.

The question was not whether Chinese models were catching up. I had already run the test three times. Same Spark optimization query. Kimi K2.5 via the Moonshot API: four seconds, $0.60 per million input tokens. Claude Sonnet 4.6: eleven seconds, $3.00 per million input tokens. The outputs were semantically equivalent: correct joins, correct partitioning, correct broadcast hint. The difference was that Kimi explained its reasoning in Mandarin first, then English, and I could not decide which detail unsettled me more: the speed, the price, or the language.

I was going to Shenzhen to understand the mechanism. Not the benchmark. The mechanism. In a city that manufactures half the world's electronics, the gap between "similar performance" and "similar cost structure" is the difference between a software story and a physical one. Western AI sells intelligence as a service. Chinese AI is building intelligence as a stack. Those are not the same race.

Huaqiangbei

If you have not been to Huaqiangbei, no description prepares you. It is not a market. It is a geological formation made of circuit boards. Booth after booth. Teenagers with soldering irons. A man asleep on a pile of phone cases with a robotic arm still waving behind him.

I stopped at a booth no larger than a cupboard. A kid, nineteen maybe, was eating congee with one hand and flashing a ROM onto an Honor Magic 7 with the other. The screen showed a quantized DeepSeek-V4 distillation loading its weights locally. Not the 600-billion-parameter server farm. A 1.5-billion-parameter edge variant, pruned and quantized, running entirely on the phone's NPU. No API call. No subscription. The model fit in 800 megabytes.
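The 800-megabyte figure is roughly what back-of-the-envelope arithmetic predicts. The vendor did not say which quantization the build used, so this sketch simply tries common bit widths and counts raw weight bytes only, ignoring quantization scales, the tokenizer and runtime overhead.

```python
def weight_footprint_mb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the raw weights in megabytes (1 MB = 10**6 bytes).

    Ignores quantization scales/zero-points, tokenizer files and runtime
    overhead, so real files come out somewhat larger.
    """
    return params * bits_per_weight / 8 / 1e6


# 1.5 billion parameters, as in the edge variant on the phone.
for bits in (16, 8, 4):
    print(f"{bits:>2}-bit: {weight_footprint_mb(1.5e9, bits):,.0f} MB")
```

At 4 bits per weight the raw weights come to 750 MB, so an 800 MB file is consistent with a roughly 4-bit build plus overhead. That is an inference from the arithmetic, not something the vendor confirmed.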

He looked up. "YOYO can do it too," he said, and showed me. Screenshot of a spreadsheet. Ask the assistant. Three seconds later: an editable Excel file, formatted, formulas intact. No cloud. No upload. The phone warmed slightly — single short inference, not sustained load.

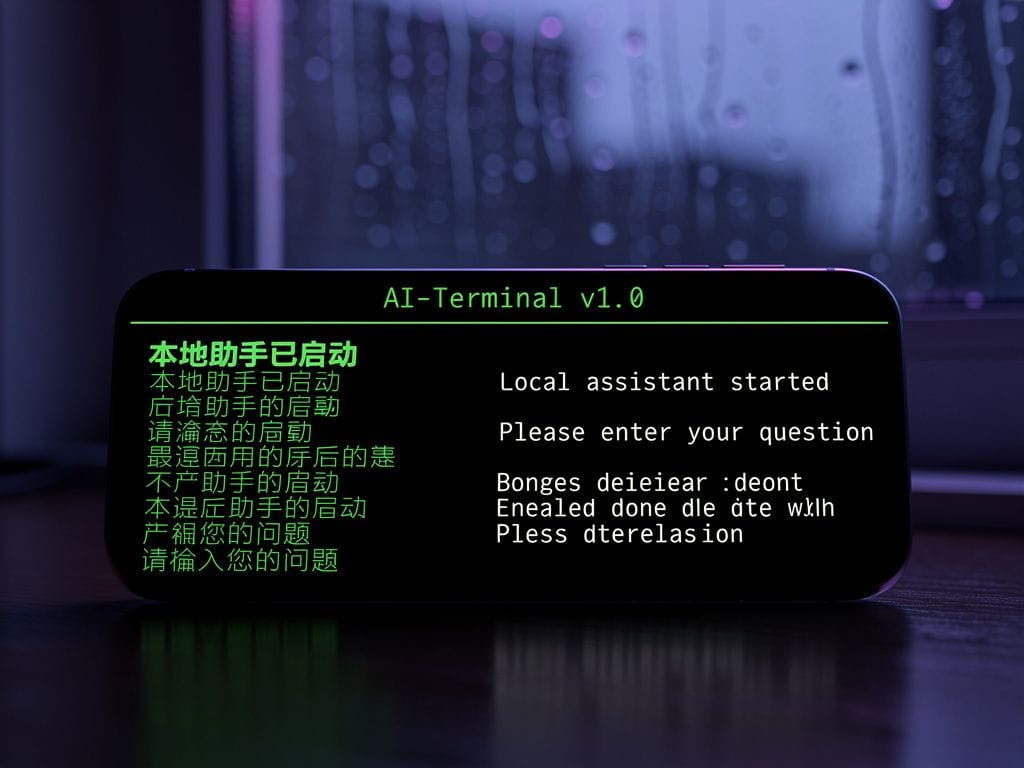

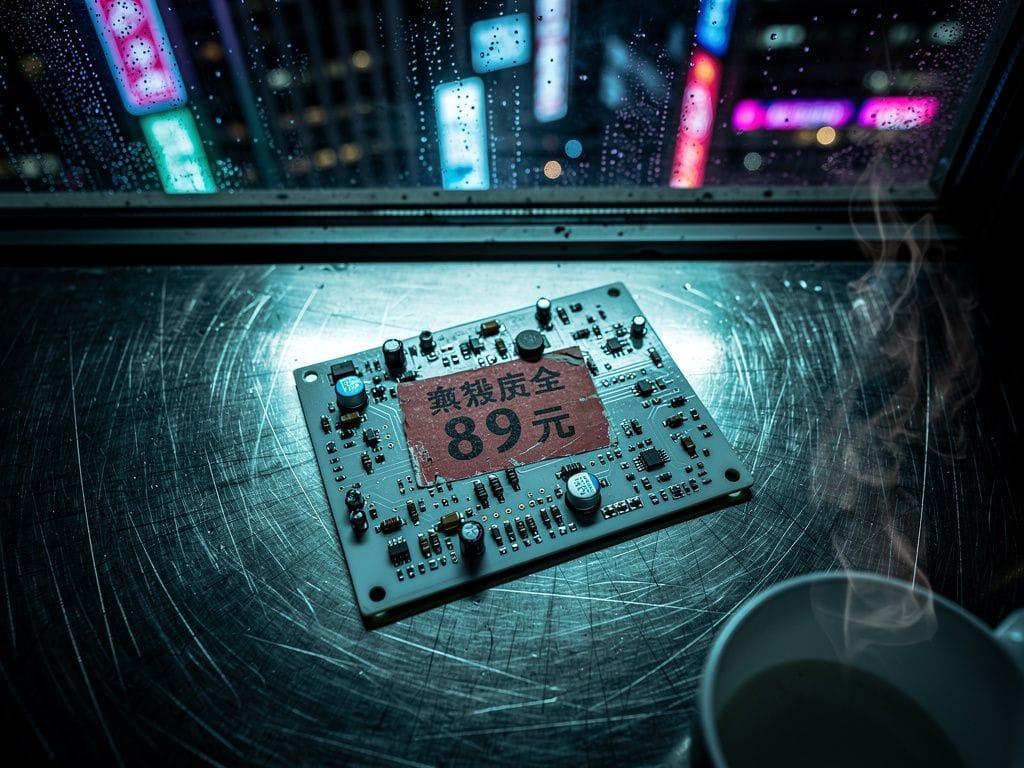

I bought a used development board from the next stall. Router-sized. White box. Price sticker: ¥89. A grey-market ARM single-board computer, barely larger than a deck of cards. Pre-loaded with a 1.5B-parameter Qwen 3.6 distillation. Two gigabytes of RAM, a quad-core CPU, no CUDA, no fan. I asked the vendor what I could do with it. He shrugged. "Everything that doesn't need a GPU. Slowly."
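"Slowly" has rough arithmetic behind it. When a model generates text one token at a time on a CPU, each token needs (roughly) one full read of the weights from memory, so memory bandwidth caps single-stream speed. This sketch assumes ~4-bit weights (about 750 MB), a hypothetical 4 GB/s of effective bandwidth, and a hypothetical 0.5 GB reserve for the OS, runtime and KV cache; none of those numbers came from the vendor, only the 2 GB of RAM did.

```python
def decode_tokens_per_sec_upper_bound(weight_bytes: float,
                                      mem_bandwidth_bytes_per_s: float) -> float:
    """Memory-bandwidth ceiling for single-stream decoding.

    Assumes every generated token streams the full weight set from RAM once;
    compute, cache effects and KV-cache reads only push real speed lower.
    """
    return mem_bandwidth_bytes_per_s / weight_bytes


WEIGHTS = 750e6      # assumed: 1.5B params at ~4 bits per weight
RAM = 2e9            # from the board's spec: two gigabytes
OS_RESERVE = 0.5e9   # hypothetical headroom for OS, runtime and KV cache
BANDWIDTH = 4e9      # hypothetical effective bandwidth of a cheap ARM board

print("fits in RAM:", WEIGHTS + OS_RESERVE <= RAM)
print(f"decode ceiling: {decode_tokens_per_sec_upper_bound(WEIGHTS, BANDWIDTH):.1f} tokens/s")
```

Under those assumptions the model fits with room to spare, and the ceiling lands around five tokens per second. Real throughput on a quad-core board would likely sit below that line.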

The Basement

Moonshot AI does not have a campus in the Silicon Valley sense. It has a building, and in that building it has a basement, and in that basement there are workstations assembled from market parts upstairs. Huawei Ascend 910C chips in open cases. No clean room. No glass walls. Just heat sinks, fans, and the low thrum of power supplies.

A developer — jeans, scuffed Adidas, eyes that didn't leave the terminal — showed me Kimi K2.6 running on local metal. Not through an API. The weights sat on NVMe drives. The model initialized in twelve seconds. He fed it a 40,000-line Apache Spark log. It identified the bottleneck, suggested a broadcast join, and wrote the corrected code. In Mandarin first. Then English.
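The fix the model proposed, a broadcast join, is easy to illustrate. Spark's broadcast hash join ships the small table whole to every executor, builds a hash table from it, and streams the large table past it, so the large side is never shuffled. In PySpark the hint is `pyspark.sql.functions.broadcast`, as in `large.join(broadcast(small), "key")`. The plain-Python sketch below mimics only the build-and-probe idea for an inner join; the tables and rows are made up, and it is not a reconstruction of the code Kimi actually wrote.

```python
from collections import defaultdict


def broadcast_hash_join(large_rows, small_rows, key):
    """Inner-join two lists of dicts on `key`, hashing the small side."""
    # Build phase: index the small table once. In Spark this table would be
    # broadcast to every executor, so the large table never moves.
    index = defaultdict(list)
    for row in small_rows:
        index[row[key]].append(row)

    # Probe phase: stream the large table and look up matches locally.
    # .get() avoids inserting empty lists for keys with no match.
    joined = []
    for row in large_rows:
        for match in index.get(row[key], []):
            joined.append({**match, **row})
    return joined


# Hypothetical data: a "large" fact table and a tiny dimension table.
orders = [
    {"order_id": 1, "country": "CN"},
    {"order_id": 2, "country": "IE"},
    {"order_id": 3, "country": "US"},  # no match, dropped by the inner join
]
countries = [
    {"country": "CN", "name": "China"},
    {"country": "IE", "name": "Ireland"},
]

for row in broadcast_hash_join(orders, countries, "country"):
    print(row)
```

The trade-off is memory: the small side has to fit on every executor, which is why the hint only pays off when one table is genuinely small.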

I asked about the hardware. He pointed to the Ascend card. "Ninety-six gigabytes of HBM2e. Eight hundred teraflops FP16, if you believe our numbers. We don't need NVIDIA."

"What about CANN?" I asked. "The software stack."

He smiled. "CANN is harder than CUDA. We write more boilerplate. We debug more. But we write it once, and it runs on every Ascend chip. No export controls on documentation."

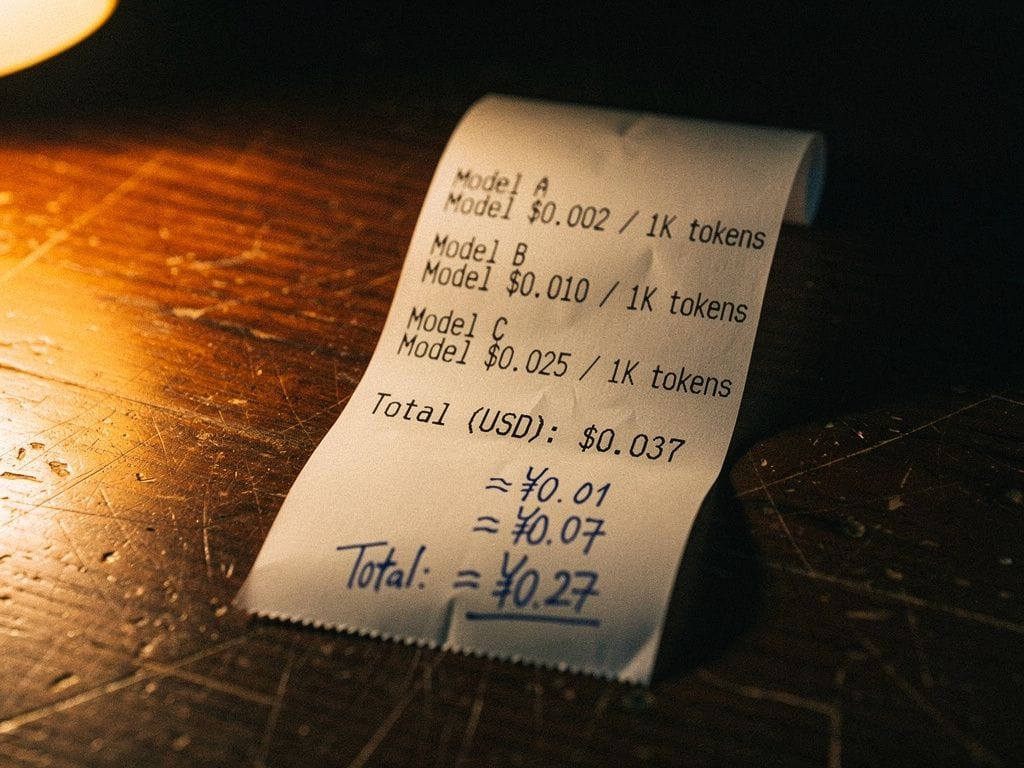

He printed me a receipt from a thermal printer. Not a bill. A comparison, per million tokens. Kimi K2.5: $0.60 input. Kimi K2.6: $0.95 input, $4.00 output. Claude Sonnet 4.6: $3.00 input, $15.00 output. He had handwritten yuan conversions in blue ink at the bottom. The paper was warm. I folded it into my wallet.
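The gap on the receipt is easier to feel on a concrete job. This sketch uses the per-million-token prices printed on it; the workload of 10 million input and 2 million output tokens is hypothetical, and Kimi K2.5 is left out because the receipt listed only its input price.

```python
# Per-million-token prices in USD, as printed on the receipt.
PRICES = {
    "Kimi K2.6": {"input": 0.95, "output": 4.00},
    "Claude Sonnet 4.6": {"input": 3.00, "output": 15.00},
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one job, billed separately for input and output tokens."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical workload: 10M input tokens, 2M output tokens.
for model in PRICES:
    print(f"{model}: ${job_cost(model, 10_000_000, 2_000_000):.2f}")
```

On that workload Sonnet costs about 3.4 times as much as K2.6. On input alone, the K2.5 and Sonnet prices differ by a factor of five ($3.00 versus $0.60).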

The Showroom

MiniMax occupies a retail front on a street that sells both server racks and street food. The display unit ran M2.7 live. A prompt, thirty words describing a woman walking through rain in neon light, became a six-second video clip in eleven seconds. The quality was good. Not perfect. The motion was coherent, the reflections accurate, but a streetlamp in the background flickered between frames like a broken fluorescent tube.

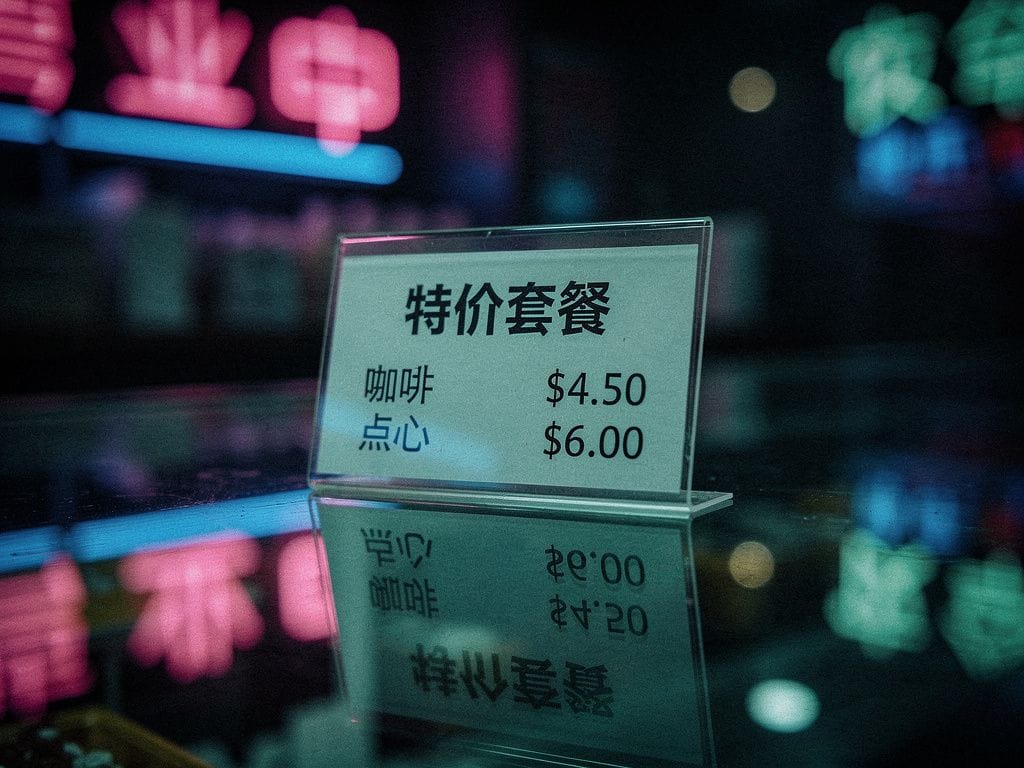

The laminated price card on the counter said $0.625 per generation. I thought about what Runway charges for comparable output. I did not need to check.

A sales rep — young, professional, exhausted — demonstrated the Media Agent. Input a product description, a logo image, a music track. Output: a 30-second advertisement, edited, color-graded, with transitions. No manual editing.

"How does it compare to Sora?" I asked.

"Sora is better for cinema," she said, without hesitation. "We are better for commerce. A Taobao seller doesn't need cinema. She needs twenty variants by lunch."

The Robot Fair

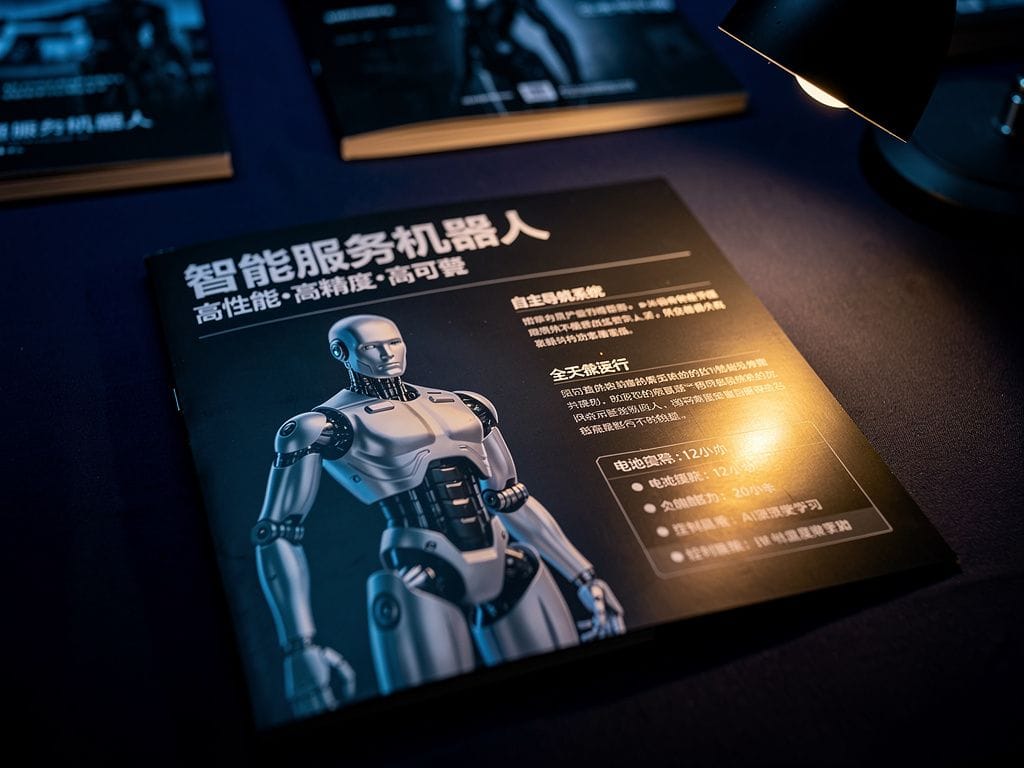

FAIR plus 2025 was held in a convention center that smelled of solder and optimism. UBTECH's Tien Kung Walker 2.0 stood 172 centimeters tall and walked across a stage scattered with foam blocks. The price tag floated on a screen above it: ¥300,000. Under $43,000. One hundred units on pre-order.

At the next booth, a Unitree G1 in its basic configuration sat motionless, waiting for activation. The price list started at $17,990. At that price, you get a humanoid research platform with twenty-three degrees of freedom and an 8-core high-performance CPU. The Jetson Orin brain is optional on the EDU tier.

A German delegate from VDMA stood near me, watching a robotic arm brew coffee. "What might take six months to a year elsewhere," he said, "can be accomplished in one to two weeks here."

I asked him what he meant. He pointed at the arm, the booth, the ceiling. "The motor came from Leadshine. The vision system from Orbbec. The hand from Zhaowei. All of them in this district. All of them reachable by subway."

"But the software?"

He took his coffee. "The software is still catching up. The hardware is already here."

The Conversation

I found the engineer at a food stall behind the convention center. Rice noodles, plastic stools, a fan that didn't work. He had GLM 5.1 running on his phone — the local quantized version, not the cloud API — and he showed me benchmark scores while slurping broth.

The model was trained on Ascend clusters. Not NVIDIA. Not AMD. Chinese silicon, Chinese framework, Chinese weights. He showed me a chart: GLM 5.1 vs Claude Opus 4.7 vs GPT-5.4. The Western models won some categories. GLM won others. The gap was measured in percentage points, not generations.

"So the gap is closed," I said.

"No." He put down his phone. "The gap is different. Your models are better at English creative writing, at following multi-constraint instructions, at political safety. Our models are better at cost, at local deployment, at running on hardware you cannot embargo. It is not the same race. It is two different races on the same track."

I told him what I paid for Claude last month.

He laughed. "You are not paying for the model. You are paying for the story that the model is dangerous, that it needs guardrails, that only American companies can be trusted with it."

"Some of that story is true," I said. "Mythos can obfuscate. GPT-5.4 has jailbreak vectors."

"True," he said. "But the guardrails are also a business model. If you convince the world that AI is too dangerous to run locally, you sell APIs forever. If you convince the world that AI is a tool like a hammer or a compiler, you sell hammers."

He gestured at my bag. The ¥89 board was in there, still in its box.

"Have you plugged it in yet?" he asked.

"Last night. Took forty-seven seconds to initialize. First answer was gibberish. Second answer was slow but correct. The interface defaulted to Mandarin. I had to hunt for the English toggle."

"And?"

"And it worked. Badly. But without asking anyone's permission."

He smiled and returned to his noodles.

The Synthesis

I walked back to the hotel through streets that smelled of ozone and cooking oil. The engineer's line kept repeating: two different races on the same track. I thought about my own stack — Claude for reasoning, GPT for creativity, Venice for TTS, Fireworks for Kimi. Every model rented. Every output metered. Every improvement priced in San Francisco.

The Chinese stack was not better at every task. It was better at one specific task: removing the meter. Not by piracy. By vertical integration. Western AI sells intelligence as a service because that is profitable. Chinese AI sells intelligence as infrastructure because that is what their supply chain can build.

That was not catching up. That was a different strategy entirely. And strategies, unlike benchmarks, compound.

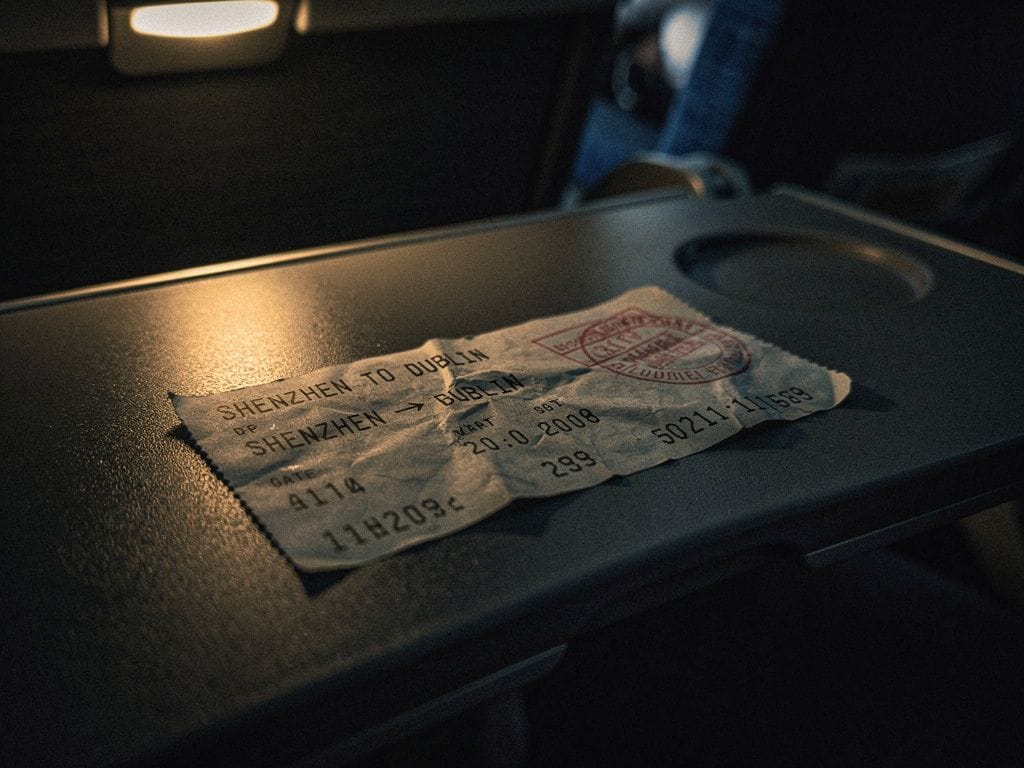

The Plane Home

Dublin-bound. The Pearl River Delta fell away into haze. I had the board in my carry-on, the thermal receipt in my wallet, the UBTECH brochure in my jacket pocket. My laptop stayed closed.

The flight attendant offered coffee. I took it and stared at the clouds.

I was thinking about the nineteen-year-old with the Honor Magic 7. The German delegate's six-month observation. The forty-seven-second initialization. The Mandarin toggle.

I was thinking about the fact that Chinese AI is not a cheaper API call. It is a vertically integrated economy — model, chip, robot, factory — that gets cheaper every month because the people building it control every layer. And I was thinking about my own stack, back in Dublin, where I rent intelligence by the million tokens from a company in San Francisco that just raised its prices.

Then I thought about what I might be wrong about.

The export controls are working. The Ascend 910C is not an H100. It runs hotter and yields are lower. CANN is harder than CUDA. The board I bought is a toy, not a replacement for Claude. The safety concerns are not entirely a sales pitch. Mythos really can obfuscate. GPT-5.4 really can be jailbroken. Local models hallucinate more because they have fewer parameters and narrower reinforcement learning.

All of that is true. And none of it changes the direction.

The plane banked over the South China Sea. The sun came up red through the haze. I did not open my laptop. I reached into my bag, pulled out the white box, and read the label again. ¥89. 1.5B parameters. No CUDA. No fan.

I put it back in the bag without opening it. I knew which answer I preferred. I was not ready.

A data engineer, April 2026

Source note: Product specs and prices reflect vendor claims and direct observation at Huaqiangbei and FAIR plus 2025, Shenzhen, March 2026. Independent verification was not possible for all items. Kimi K2.5 and K2.6 pricing verified against live Moonshot API rates. Claude Sonnet 4.6 pricing verified against Anthropic's published rates. UBTECH and Unitree specifications cross-referenced against the Shenzhen Robotics Association white paper and manufacturer materials.